We've worked with dozens of teams building LLM agents across industries. Consistently, the most successful implementations use simple, composable patterns rather than complex frameworks.

我们与数十个跨行业的团队合作开发大语言模型(LLM)智能体。始终如一地,最成功的实现方案都采用了简单、可组合的模式,而非复杂的框架。

Over the past year, we've worked with dozens of teams building large language model (LLM) agents across industries. Consistently, the most successful implementations weren't using complex frameworks or specialized libraries. Instead, they were building with simple, composable patterns.

在过去一年中,我们与数十个跨行业的团队合作构建大语言模型(LLM)智能体。那些最成功的实现方案始终没有依赖复杂的框架或专用库,而是采用简单、可组合的模式进行构建。

In this post, we share what we’ve learned from working with our customers and building agents ourselves, and give practical advice for developers on building effective agents.

在本文中,我们将分享从客户合作及自身智能体开发实践中获得的经验,并为开发者提供构建高效智能体的实用建议。

What are agents?(什么是智能体?)

"Agent" can be defined in several ways. Some customers define agents as fully autonomous systems that operate independently over extended periods, using various tools to accomplish complex tasks. Others use the term to describe more prescriptive implementations that follow predefined workflows. At Anthropic, we categorize all these variations as agentic systems, but draw an important architectural distinction between workflows and agents:

Workflows are systems where LLMs and tools are orchestrated through predefined code paths.

Agents, on the other hand, are systems where LLMs dynamically direct their own processes and tool usage, maintaining control over how they accomplish tasks.

“智能体”可以有多种定义。一些客户将其定义为能够长期独立运行、利用各种工具完成复杂任务的完全自主系统;另一些客户则用该术语描述遵循预定义工作流的、更具指令性的实现方式。在 Anthropic,我们将这些不同形式统称为“智能体系统”,但在架构上对“工作流”和“智能体”做出重要区分:

• 工作流是指通过预定义代码路径协调大语言模型与工具的系统。

• 智能体则是指由大语言模型动态主导自身流程与工具使用、自主掌控任务执行方式的系统。

Below, we will explore both types of agentic systems in detail. In Appendix 1 (“Agents in Practice”), we describe two domains where customers have found particular value in using these kinds of systems.

下文将详细探讨这两类智能体系统。在附录1(《实践中的智能体》)中,我们描述了两个客户在其中显著受益于这类系统的应用领域。

When (and when not) to use agents(何时(以及何时不)使用智能体)

When building applications with LLMs, we recommend finding the simplest solution possible, and only increasing complexity when needed. This might mean not building agentic systems at all. Agentic systems often trade latency and cost for better task performance, and you should consider when this tradeoff makes sense.

在使用大语言模型构建应用时,我们建议尽可能采用最简单的解决方案,仅在必要时才增加复杂性。这意味着有时甚至完全不需要构建智能体系统。智能体系统通常以更高的延迟和成本换取更优的任务表现,因此你应仔细评估这种权衡是否值得。

When more complexity is warranted, workflows offer predictability and consistency for well-defined tasks, whereas agents are the better option when flexibility and model-driven decision-making are needed at scale. For many applications, however, optimizing single LLM calls with retrieval and in-context examples is usually enough.

当确实需要更高复杂度时,对于定义明确的任务,工作流能提供可预测性和一致性;而当需要大规模的灵活性和模型驱动的决策能力时,智能体则是更优选择。然而,对许多应用场景而言,通过检索增强和上下文示例优化单次大语言模型调用通常已足够。

When and how to use frameworks(何时以及如何使用框架)

There are many frameworks that make agentic systems easier to implement, including:

The Claude Agent SDK;

Strands Agents SDK by AWS;

Rivet, a drag and drop GUI LLM workflow builder; and

Vellum, another GUI tool for building and testing complex workflows.

目前有许多框架可简化智能体系统的实现,包括:

• Claude 智能体 SDK;

• AWS 的 Strands Agents SDK;

• Rivet,一款支持拖拽操作的图形化 LLM 工作流构建工具;

• Vellum,另一款用于构建和测试复杂工作流的图形化工具。

These frameworks make it easy to get started by simplifying standard low-level tasks like calling LLMs, defining and parsing tools, and chaining calls together. However, they often create extra layers of abstraction that can obscure the underlying prompts and responses, making them harder to debug. They can also make it tempting to add complexity when a simpler setup would suffice.

这些框架通过简化调用 LLM、定义与解析工具、串联调用等标准底层任务,帮助开发者快速上手。但它们往往引入额外的抽象层,可能掩盖底层提示词(prompt)与响应,使调试变得更加困难。此外,它们还容易诱使开发者在简单方案已足够的情况下过度增加系统复杂度。

We suggest that developers start by using LLM APIs directly: many patterns can be implemented in a few lines of code. If you do use a framework, ensure you understand the underlying code. Incorrect assumptions about what's under the hood are a common source of customer error.

我们建议开发者先直接使用 LLM API:许多模式只需几行代码即可实现。如果确实使用框架,请务必理解其底层代码逻辑。对框架内部机制做出错误假设,是客户常见问题的重要根源。

See our cookbook for some sample implementations.

有关具体实现示例,请参阅我们的《实践手册》(cookbook)。

Building blocks, workflows, and agents(构建模块、工作流与智能体)

In this section, we’ll explore the common patterns for agentic systems we’ve seen in production. We'll start with our foundational building block—the augmented LLM—and progressively increase complexity, from simple compositional workflows to autonomous agents.

本节将探讨我们在生产环境中观察到的智能体系统常见模式。我们将从基础构建模块——增强型 LLM 出发,逐步提升复杂度,从简单的组合式工作流过渡到自主智能体。

Building block: The augmented LLM(构建模块:增强型 LLM)

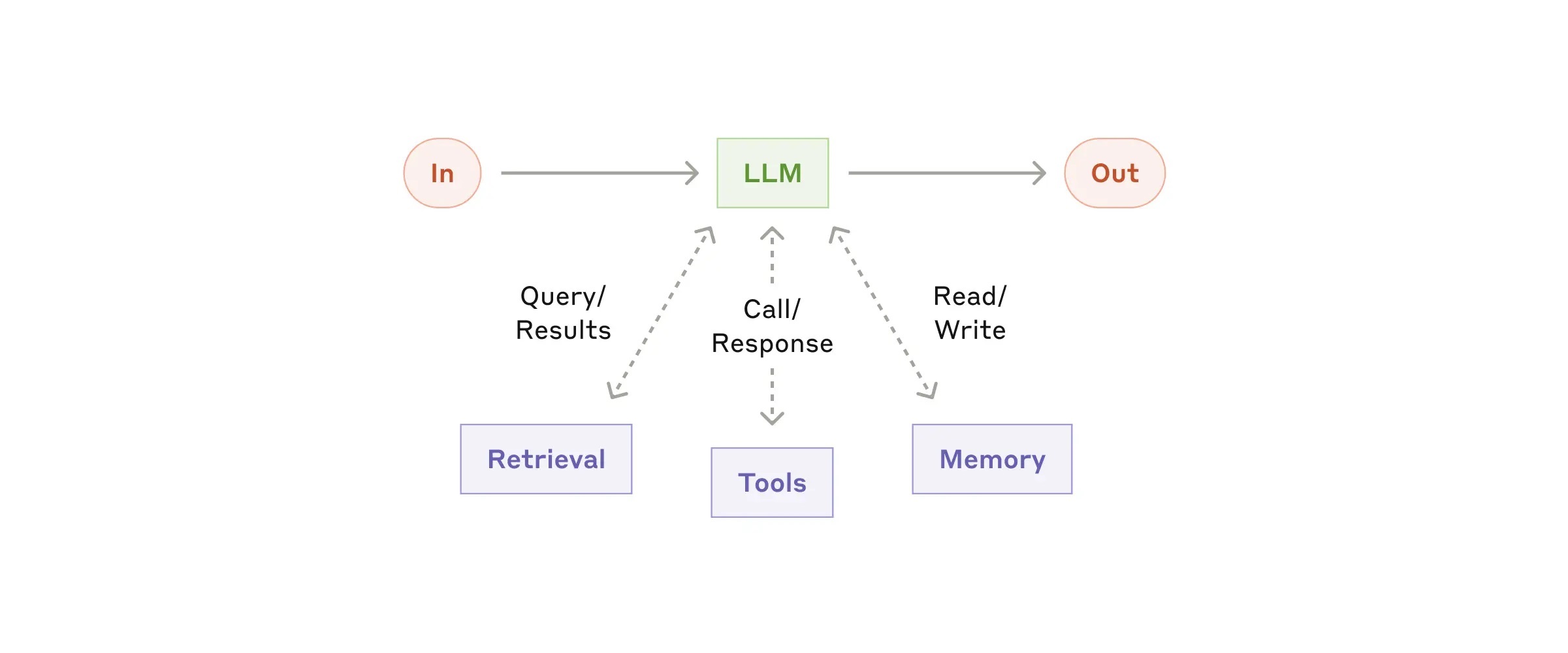

The basic building block of agentic systems is an LLM enhanced with augmentations such as retrieval, tools, and memory. Our current models can actively use these capabilities—generating their own search queries, selecting appropriate tools, and determining what information to retain.

智能体系统的基本构建模块是一种经过增强的大语言模型,其增强能力包括检索、工具调用和记忆功能。我们当前的模型能够主动运用这些能力——自行生成搜索查询、选择合适的工具,并决定保留哪些信息。

The augmented LLM(增强型 LLM)

We recommend focusing on two key aspects of the implementation: tailoring these capabilities to your specific use case and ensuring they provide an easy, well-documented interface for your LLM. While there are many ways to implement these augmentations, one approach is through our recently released Model Context Protocol, which allows developers to integrate with a growing ecosystem of third-party tools with a simple client implementation.

我们建议重点关注实现中的两个关键方面:一是根据你的具体用例定制这些能力,二是确保它们为你的大语言模型提供一个简单且文档完善的接口。尽管实现这些增强功能的方式多种多样,但其中一种方法是通过我们近期发布的“模型上下文协议”(Model Context Protocol),该协议允许开发者通过简单的客户端实现,与不断扩展的第三方工具生态系统集成。

For the remainder of this post, we'll assume each LLM call has access to these augmented capabilities.

在本文后续部分,我们将默认每次大语言模型调用均可访问上述增强能力。

Workflow: Prompt chaining(工作流:提示词链式调用(Prompt Chaining))

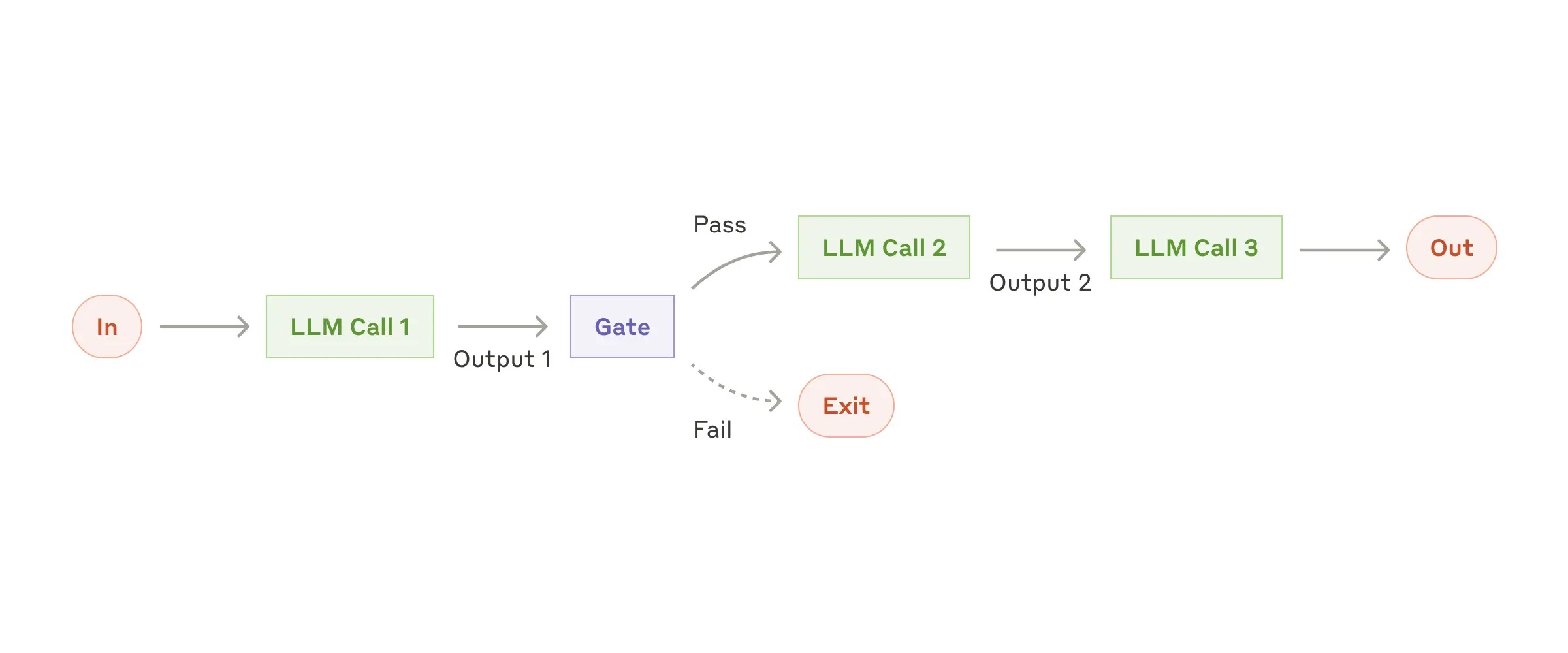

Prompt chaining decomposes a task into a sequence of steps, where each LLM call processes the output of the previous one. You can add programmatic checks (see "gate" in the diagram below) on any intermediate steps to ensure that the process is still on track.

提示词链式调用将一项任务拆解为一系列步骤,其中每次大语言模型调用都处理前一次调用的输出结果。你可以在任意中间步骤加入程序化检查(见下图中的“门控”/“gate”),以确保整个流程仍在正确轨道上运行。

When to use this workflow: This workflow is ideal for situations where the task can be easily and cleanly decomposed into fixed subtasks. The main goal is to trade off latency for higher accuracy, by making each LLM call an easier task.

何时使用此工作流:当任务可以轻松且清晰地拆分为固定的子任务时,这种工作流尤为理想。其主要目标是通过让每次大语言模型调用处理更简单的任务,以牺牲一定延迟为代价换取更高的准确性。

Examples where prompt chaining is useful:

Generating marketing copy, then translating it into a different language.

Writing an outline of a document, checking that the outline meets certain criteria, then writing the document based on the outline.

提示词链式调用的典型应用场景包括:

先生成营销文案,再将其翻译成另一种语言;

先撰写文档提纲,检查提纲是否满足特定标准,再基于该提纲撰写完整文档。
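The outline-then-draft example above can be sketched in a few lines. This is a minimal illustration rather than a reference implementation: the `LLM` callable, `outline_gate`, `chain_outline_then_draft`, and the offline `fake_llm` stand-in are all hypothetical names introduced here, and the gate criterion (at least three bullet lines) is an arbitrary assumption.

```python
from typing import Callable

# Hypothetical interface: takes a prompt, returns the completion text.
LLM = Callable[[str], str]


def outline_gate(outline: str, min_sections: int = 3) -> bool:
    """Programmatic check: the outline needs at least `min_sections` bullet lines."""
    bullets = [line for line in outline.splitlines() if line.strip().startswith("-")]
    return len(bullets) >= min_sections


def chain_outline_then_draft(llm: LLM, topic: str) -> str:
    """Two chained LLM calls with a gate between them."""
    outline = llm(f"Write a bulleted outline for a document about: {topic}")
    if not outline_gate(outline):
        # Stop early instead of drafting from a bad outline.
        raise ValueError("Outline failed the gate; stopping before the drafting step.")
    return llm(f"Write the document following this outline:\n{outline}")


def fake_llm(prompt: str) -> str:
    """Stand-in model so the sketch runs offline."""
    if prompt.startswith("Write a bulleted outline"):
        return "- Intro\n- Body\n- Conclusion"
    return "DRAFT based on:\n" + prompt.split("\n", 1)[1]


if __name__ == "__main__":
    print(chain_outline_then_draft(fake_llm, "building agents"))
```

Swapping `fake_llm` for a real API call leaves the control flow unchanged, which is the point of the pattern: the code path is fixed and only the individual steps are model-driven.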

Workflow: Routing(工作流:路由(Routing))

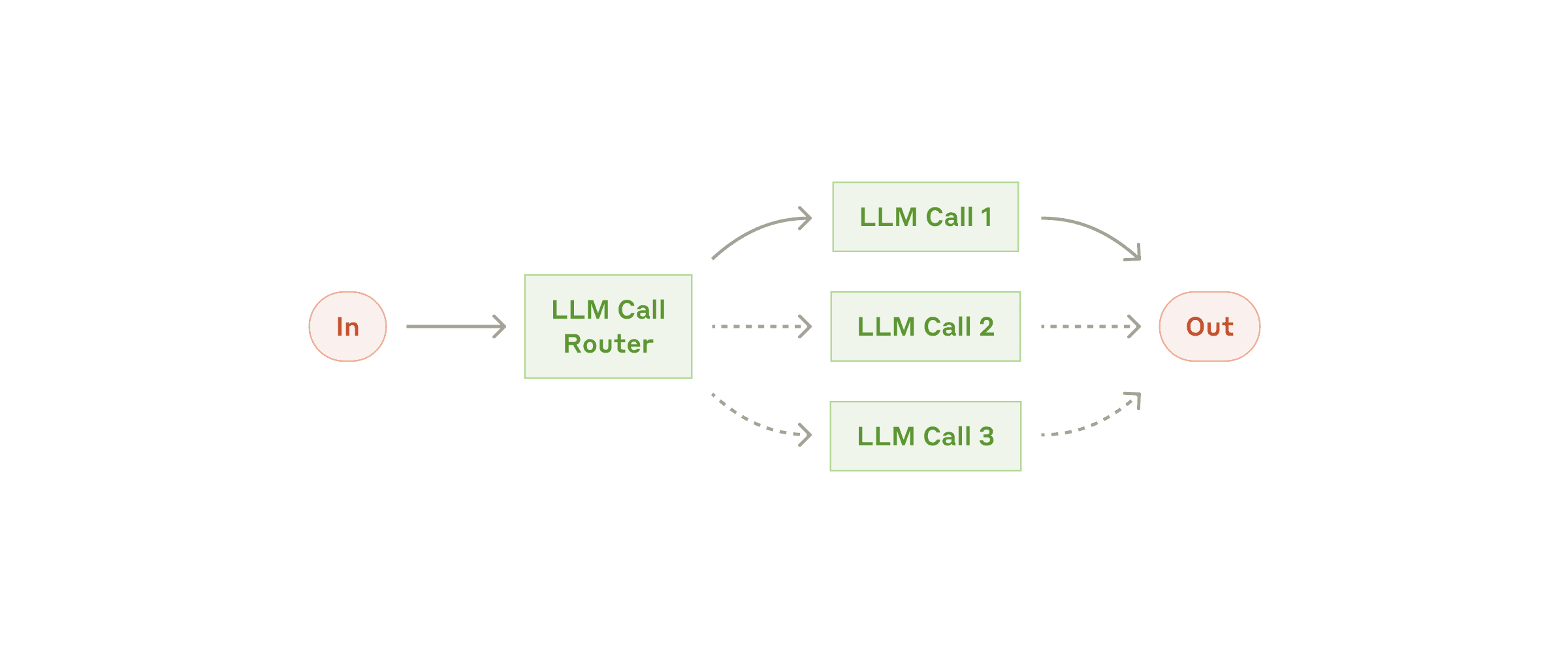

Routing classifies an input and directs it to a specialized follow-up task. This workflow allows for separation of concerns and for building more specialized prompts. Without this workflow, optimizing for one kind of input can hurt performance on other inputs.

路由机制会对输入进行分类,并将其导向专门的后续任务。这种工作流有助于实现关注点分离,并构建更具针对性的提示词。若不采用路由机制,在优化某一类输入表现的同时,可能会损害对其他类型输入的处理效果。

The routing workflow

When to use this workflow: Routing works well for complex tasks where there are distinct categories that are better handled separately, and where classification can be handled accurately, either by an LLM or a more traditional classification model/algorithm.

何时使用此工作流:当面对复杂任务,且存在明显可区分的类别、各需独立处理,同时分类本身可通过大语言模型或更传统的分类模型/算法准确完成时,路由机制效果显著。

Examples where routing is useful:

Directing different types of customer service queries (general questions, refund requests, technical support) into different downstream processes, prompts, and tools.

Routing easy/common questions to smaller, cost-efficient models like Claude Haiku 4.5 and hard/unusual questions to more capable models like Claude Sonnet 4.5 to optimize for best performance.

路由的典型应用场景包括:

将不同类型的客户服务请求(如一般咨询、退款申请、技术支持)分别引导至不同的下游流程、提示词和工具;

将简单或常见的问题路由至成本更低、效率更高的小型模型(如 Claude Haiku 4.5),而将复杂或罕见的问题交由能力更强的模型(如 Claude Sonnet 4.5)处理,以在性能与成本之间取得最佳平衡。
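A routing step can be as small as a classifier plus a dispatch table. The sketch below assumes a hypothetical `classify` callable (which could wrap an LLM or a traditional classifier) and hypothetical handlers standing in for the specialized prompts and tools; the keyword classifier exists only so the example runs offline.

```python
from typing import Callable, Dict, Tuple

Classifier = Callable[[str], str]  # hypothetical: returns a category label
Handler = Callable[[str], str]     # hypothetical: a specialized prompt/model/tool pipeline


def route(query: str, classify: Classifier, handlers: Dict[str, Handler],
          fallback: str = "general") -> Tuple[str, str]:
    """Classify the query, then dispatch it to the matching specialized handler."""
    label = classify(query).strip().lower()
    if label not in handlers:
        # Unexpected label from the classifier: fall back rather than fail.
        label = fallback
    return label, handlers[label](query)


def keyword_classifier(query: str) -> str:
    """Stand-in for an LLM or traditional classification model."""
    text = query.lower()
    if "refund" in text:
        return "refund"
    if "error" in text or "crash" in text:
        return "technical"
    return "general"


HANDLERS: Dict[str, Handler] = {
    "general": lambda q: f"[general prompt] {q}",
    "refund": lambda q: f"[refund prompt + refund tools] {q}",
    "technical": lambda q: f"[technical prompt + diagnostics tools] {q}",
}

if __name__ == "__main__":
    print(route("The app shows an error on login", keyword_classifier, HANDLERS))
```

The same dispatch table works for model selection: each handler can simply call a smaller or a more capable model.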

Workflow: Parallelization(工作流:并行化(Parallelization))

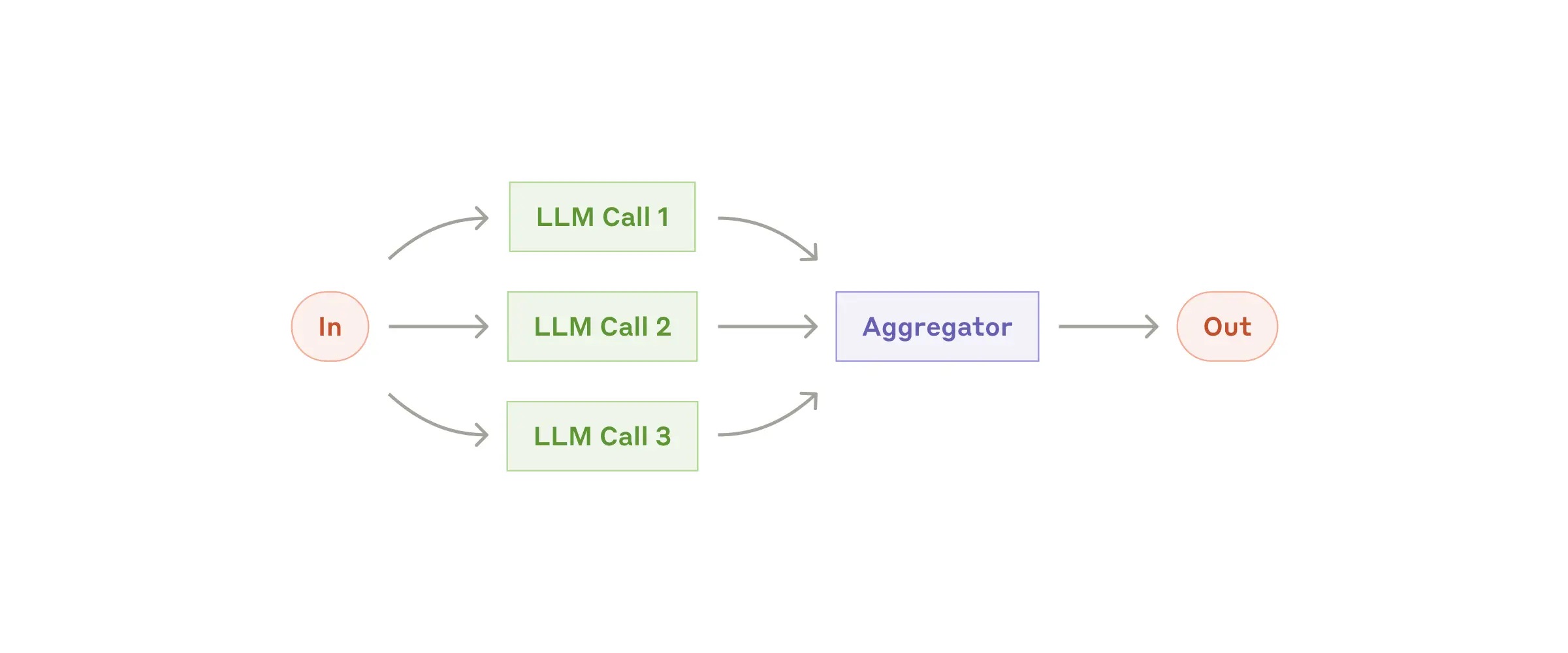

LLMs can sometimes work simultaneously on a task and have their outputs aggregated programmatically. This workflow, parallelization, manifests in two key variations:

Sectioning: Breaking a task into independent subtasks run in parallel.

Voting: Running the same task multiple times to get diverse outputs.

大语言模型有时可同时处理同一任务的不同部分,其输出再通过程序化方式聚合。这种并行化工作流主要有两种关键变体:

分段处理(Sectioning):将任务拆分为多个相互独立的子任务,并行执行;

投票机制(Voting):多次执行相同任务,以获得多样化的输出结果。

The parallelization workflow

When to use this workflow: Parallelization is effective when the divided subtasks can be parallelized for speed, or when multiple perspectives or attempts are needed for higher-confidence results. For complex tasks with multiple considerations, LLMs generally perform better when each consideration is handled by a separate LLM call, allowing focused attention on each specific aspect.

何时使用此工作流:当拆分后的子任务可并行执行以提升速度,或当需要多个视角或多次尝试以提高结果置信度时,并行化非常有效。对于涉及多重考量因素的复杂任务,通常让每个因素由单独的大语言模型调用处理,使模型能集中注意力于特定方面,从而获得更优表现。

Examples where parallelization is useful:

Sectioning:

Implementing guardrails where one model instance processes user queries while another screens them for inappropriate content or requests. This tends to perform better than having the same LLM call handle both guardrails and the core response.

Automating evals of LLM performance, where each LLM call evaluates a different aspect of the model’s performance on a given prompt.

Voting:

Reviewing a piece of code for vulnerabilities, where several different prompts review and flag the code if they find a problem.

Evaluating whether a given piece of content is inappropriate, with multiple prompts evaluating different aspects or requiring different vote thresholds to balance false positives and negatives.

并行化的典型应用场景包括:

分段处理:

实施安全护栏(guardrails):一个模型实例处理用户查询,另一个同时筛查内容是否包含不当请求或敏感信息。这种方式通常优于让单次 LLM 调用同时承担核心响应与安全审查双重职责。

自动化评估 LLM 性能:每次 LLM 调用评估模型在给定提示下表现的某一特定维度。

投票机制:

审查一段代码是否存在漏洞:多个不同提示分别审查代码,一旦发现潜在问题即予以标记;

判断某段内容是否不当:多个提示从不同角度进行评估,或设置不同的投票阈值,以在误报(false positives)与漏报(false negatives)之间取得平衡。
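Both variations reduce to "run several calls concurrently, then aggregate in code". The following sketch uses Python's standard `concurrent.futures.ThreadPoolExecutor`; the `LLM` callable, the `FLAG`/`OK` answer convention, and `fake_reviewer` are assumptions made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence

# Hypothetical interface: takes a prompt, returns the completion text.
LLM = Callable[[str], str]


def run_sections(llm: LLM, prompts: Sequence[str]) -> List[str]:
    """Sectioning: independent prompts run concurrently; results keep prompt order."""
    with ThreadPoolExecutor(max_workers=max(1, len(prompts))) as pool:
        return list(pool.map(llm, prompts))


def vote(llm: LLM, prompts: Sequence[str], threshold: int) -> bool:
    """Voting: flag the content if at least `threshold` reviewers answer FLAG."""
    answers = run_sections(llm, prompts)
    flags = sum(1 for answer in answers if answer.strip().upper().startswith("FLAG"))
    return flags >= threshold


def fake_reviewer(prompt: str) -> str:
    """Stand-in reviewer: only the injection-focused prompt flags string-built SQL."""
    return "FLAG" if "injection" in prompt and "+ user_input" in prompt else "OK"


CODE = 'query = "SELECT * FROM t WHERE id=" + user_input'
REVIEW_PROMPTS = [f"Review for {focus}:\n{CODE}"
                  for focus in ("injection", "hardcoded secrets", "path traversal")]

if __name__ == "__main__":
    print(vote(fake_reviewer, REVIEW_PROMPTS, threshold=1))
```

Raising `threshold` trades false positives for false negatives, which is the balance the content-moderation example describes.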

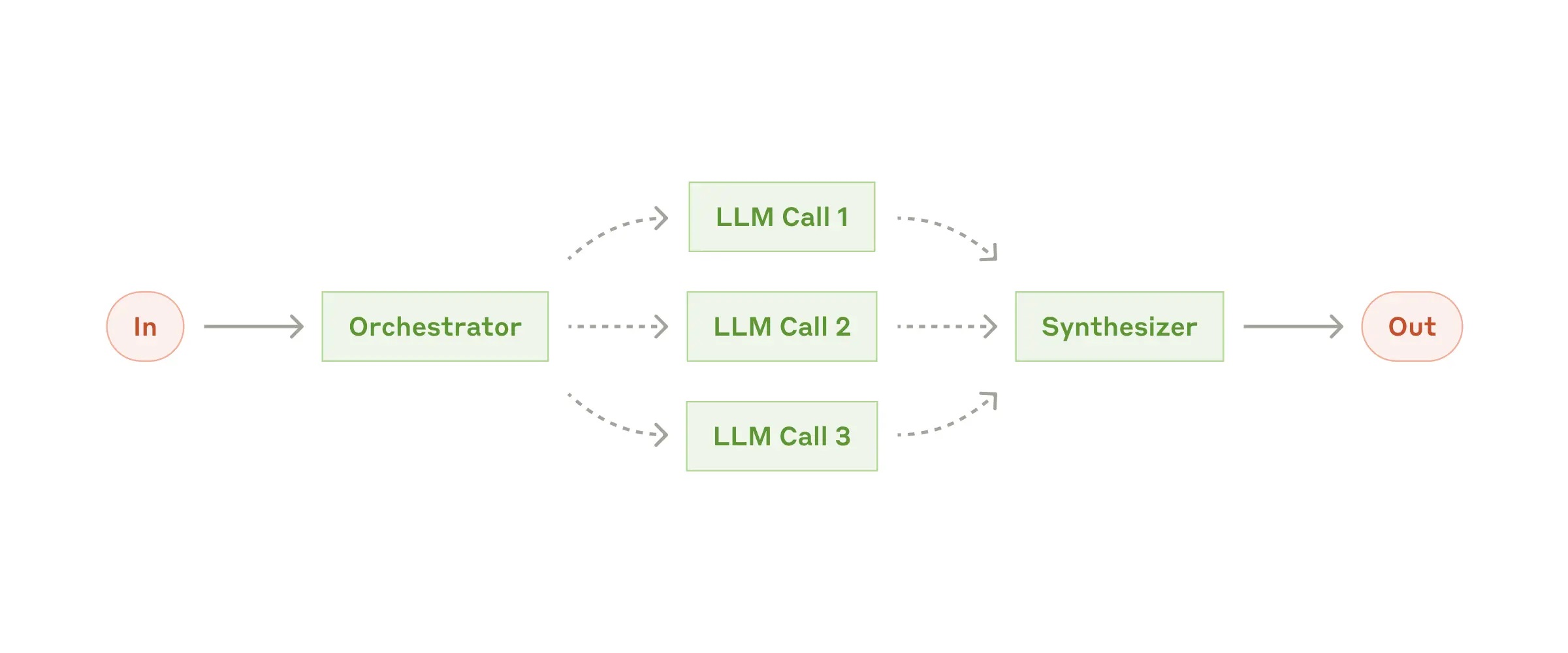

Workflow: Orchestrator-workers(工作流:协调器-工作者(Orchestrator-Workers))

In the orchestrator-workers workflow, a central LLM dynamically breaks down tasks, delegates them to worker LLMs, and synthesizes their results.

在协调器-工作者工作流中,一个中心大语言模型(LLM)会动态地分解任务,将其委派给多个工作者 LLM,并综合它们的输出结果。

The orchestrator-workers workflow

When to use this workflow: This workflow is well-suited for complex tasks where you can’t predict the subtasks needed (in coding, for example, the number of files that need to be changed and the nature of the change in each file likely depend on the task). Although its topology is similar to parallelization, the key difference is its flexibility: subtasks aren't pre-defined, but are determined by the orchestrator based on the specific input.

何时使用此工作流:该工作流适用于那些无法预先确定所需子任务的复杂场景(例如在编程任务中,需要修改的文件数量及每个文件的具体变更内容通常取决于具体任务)。尽管其拓扑结构与并行化类似,但关键区别在于其灵活性——子任务并非预先定义,而是由协调器根据具体输入动态决定。

Examples where orchestrator-workers is useful:

Coding products that make complex changes to multiple files each time.

Search tasks that involve gathering and analyzing information from multiple sources for possible relevant information.

协调器-工作者的典型应用场景包括:

每次操作均需对多个文件进行复杂修改的编程类产品;

需从多个来源收集并分析信息以识别潜在相关内容的搜索任务。
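The difference from parallelization shows up in code as a plan that is produced at run time rather than hardcoded. In this sketch the orchestrator is asked for a JSON list of subtasks; that output format, the `LLM` callable, and the `fake_orchestrator` / `fake_worker` stand-ins are assumptions for illustration only.

```python
import json
from typing import Callable

# Hypothetical interface: takes a prompt, returns the completion text.
LLM = Callable[[str], str]


def orchestrate(orchestrator: LLM, worker: LLM, task: str) -> str:
    """Plan subtasks dynamically, delegate each to a worker, then synthesize."""
    # 1. The orchestrator decides the subtasks for this specific input.
    raw_plan = orchestrator(f"Break this task into subtasks as a JSON list of strings:\n{task}")
    plan = json.loads(raw_plan)
    if not isinstance(plan, list) or not all(isinstance(s, str) for s in plan):
        raise ValueError("Orchestrator did not return a JSON list of strings")
    # 2. Each subtask goes to a worker call (sequential here; could run in parallel).
    results = [worker(subtask) for subtask in plan]
    # 3. The orchestrator synthesizes the worker outputs.
    report = "\n".join(f"{subtask}: {result}" for subtask, result in zip(plan, results))
    return orchestrator(f"Synthesize these results for the task '{task}':\n{report}")


def fake_orchestrator(prompt: str) -> str:
    """Stand-in orchestrator so the sketch runs offline."""
    if prompt.startswith("Break this task"):
        return json.dumps(["edit parser.py", "edit tests/test_parser.py"])
    return "SUMMARY\n" + prompt.split("\n", 1)[1]


def fake_worker(subtask: str) -> str:
    """Stand-in worker."""
    return f"done ({subtask})"


if __name__ == "__main__":
    print(orchestrate(fake_orchestrator, fake_worker, "support trailing commas"))
```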

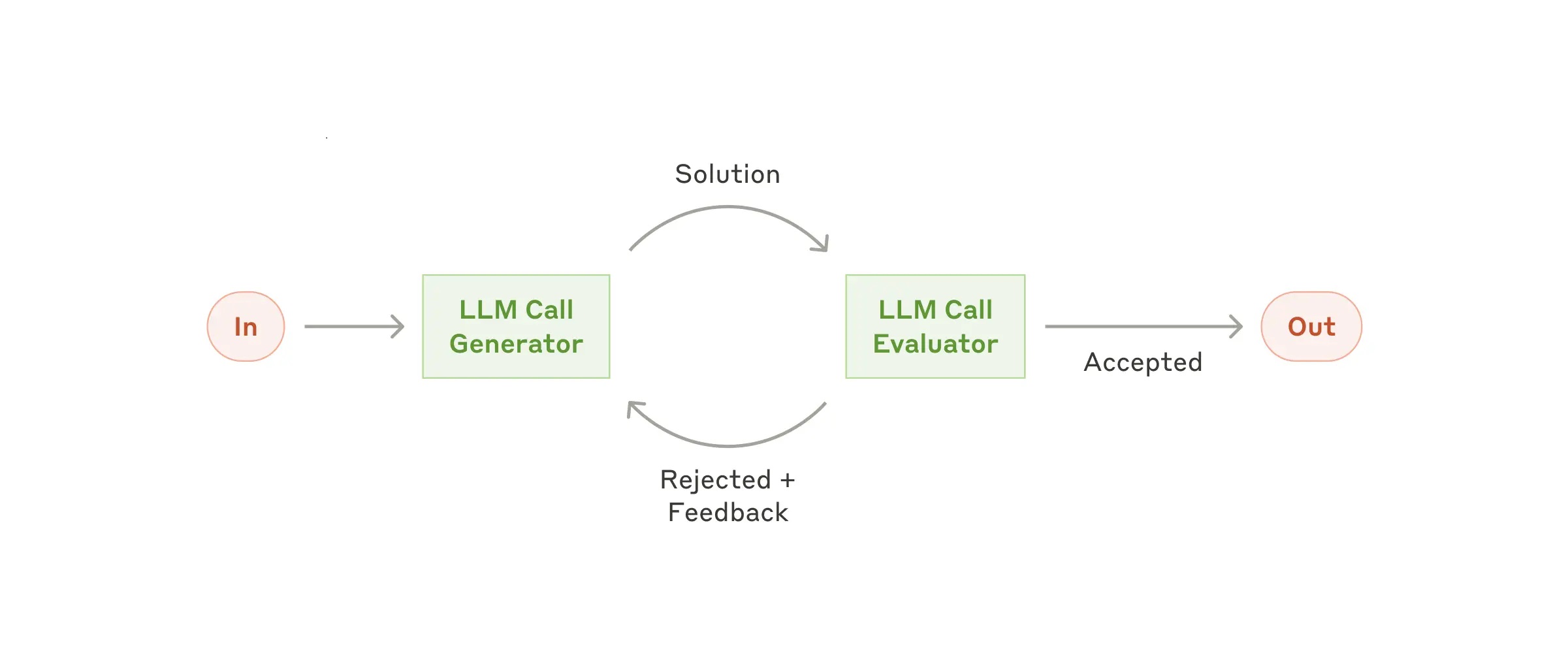

Workflow: Evaluator-optimizer(评估器-优化器(Evaluator-Optimizer))

In the evaluator-optimizer workflow, one LLM call generates a response while another provides evaluation and feedback in a loop.

在评估器-优化器工作流中,一次 LLM 调用生成初始响应,另一次 LLM 调用则在循环中提供评估与反馈,以迭代优化结果。

The evaluator-optimizer workflow

When to use this workflow: This workflow is particularly effective when we have clear evaluation criteria, and when iterative refinement provides measurable value. The two signs of good fit are, first, that LLM responses can be demonstrably improved when a human articulates their feedback; and second, that the LLM can provide such feedback. This is analogous to the iterative writing process a human writer might go through when producing a polished document.

何时使用此工作流:当具备清晰的评估标准,且通过迭代优化能带来可衡量的价值时,该工作流尤为有效。判断其适用性的两个关键信号是:第一,当人类明确表达反馈意见时,LLM 的输出确实能得到显著改进;第二,LLM 本身能够提供此类反馈。这类似于人类作者在打磨一篇精炼文章时所经历的反复修改过程。

Examples where evaluator-optimizer is useful:

Literary translation where there are nuances that the translator LLM might not capture initially, but where an evaluator LLM can provide useful critiques.

Complex search tasks that require multiple rounds of searching and analysis to gather comprehensive information, where the evaluator decides whether further searches are warranted.

评估器-优化器的典型应用场景包括:

文学翻译任务,其中译者 LLM 可能最初未能捕捉某些细微语义,而评估器 LLM 能提供有价值的批评建议;

需要多轮搜索与分析才能全面收集信息的复杂搜索任务,由评估器判断是否有必要继续执行后续搜索。
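The loop itself is short: generate, evaluate, feed the critique back, and stop on acceptance or after a fixed number of rounds. The `Generator` and `Evaluator` signatures below are hypothetical, and the stand-ins use a trivial acceptance criterion so the example runs offline.

```python
from typing import Callable, Tuple

Generator = Callable[[str, str], str]               # hypothetical: (task, feedback) -> draft
Evaluator = Callable[[str, str], Tuple[bool, str]]  # hypothetical: (task, draft) -> (accepted, feedback)


def refine(generate: Generator, evaluate: Evaluator, task: str,
           max_rounds: int = 3) -> Tuple[str, int]:
    """Generate a draft, then loop on evaluator feedback until accepted or out of rounds."""
    feedback = ""
    draft = ""
    for round_number in range(1, max_rounds + 1):
        draft = generate(task, feedback)
        accepted, feedback = evaluate(task, draft)
        if accepted:
            return draft, round_number
    # Round limit reached: return the latest draft rather than looping forever.
    return draft, max_rounds


def fake_generator(task: str, feedback: str) -> str:
    """Stand-in generator: improves only after receiving feedback."""
    return "Hello." if feedback else "hello"


def fake_evaluator(task: str, draft: str) -> Tuple[bool, str]:
    """Stand-in evaluator with a clear, checkable criterion."""
    if draft[:1].isupper() and draft.endswith("."):
        return True, ""
    return False, "Capitalize the first letter and end with a period."


if __name__ == "__main__":
    print(refine(fake_generator, fake_evaluator, "Write a greeting"))
```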

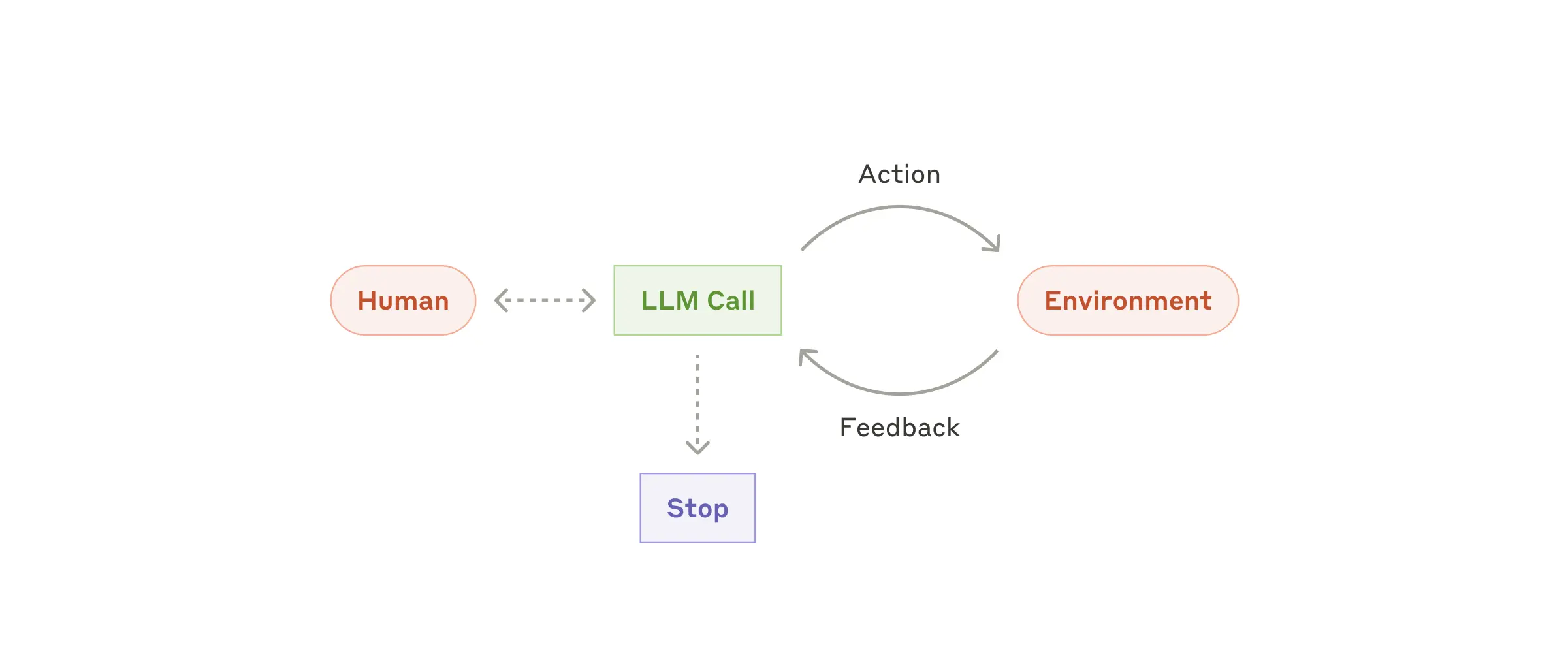

Agents(智能体)

Agents are emerging in production as LLMs mature in key capabilities: understanding complex inputs, engaging in reasoning and planning, using tools reliably, and recovering from errors. Agents begin their work with either a command from, or interactive discussion with, the human user. Once the task is clear, agents plan and operate independently, potentially returning to the human for further information or judgement. During execution, it's crucial for agents to gain “ground truth” from the environment at each step (such as tool call results or code execution) to assess their progress. Agents can then pause for human feedback at checkpoints or when encountering blockers. The task often terminates upon completion, but it’s also common to include stopping conditions (such as a maximum number of iterations) to maintain control.

随着大语言模型(LLM)在理解复杂输入、进行推理与规划、可靠使用工具以及从错误中恢复等关键能力上的不断成熟,智能体(Agents)正逐步进入生产应用阶段。智能体的工作通常始于用户下达的指令,或与用户进行交互式讨论。一旦任务目标明确,智能体便会自主制定计划并独立执行,必要时可返回向人类用户请求更多信息或判断。在执行过程中,智能体必须在每一步都从环境中获取“真实反馈”(例如工具调用结果或代码执行输出),以评估自身进展。随后,智能体可在预设检查点或遇到障碍时暂停,等待人工反馈。任务通常在完成后终止,但也常设置停止条件(如最大迭代次数)以保持对流程的控制。

Agents can handle sophisticated tasks, but their implementation is often straightforward. They are typically just LLMs using tools based on environmental feedback in a loop. It is therefore crucial to design toolsets and their documentation clearly and thoughtfully. We expand on best practices for tool development in Appendix 2 ("Prompt Engineering your Tools").

智能体能够处理高度复杂的任务,但其实现方式往往相当简洁——通常只是大语言模型在一个循环中根据环境反馈调用工具。因此,清晰且深思熟虑地设计工具集及其文档至关重要。我们在附录2(《为你的工具进行提示工程》)中进一步阐述了工具开发的最佳实践。
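The "LLM using tools based on environmental feedback in a loop" description maps onto code roughly as follows. This is a sketch under stated assumptions: the `Model` interface (returning either a tool call or a final answer), the transcript format, and the scripted stand-in model are all hypothetical and do not reflect any particular API.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical decision format: ("tool", tool_name, argument) or ("final", answer, "").
Decision = Tuple[str, str, str]
Model = Callable[[List[str]], Decision]
Tool = Callable[[str], str]


def run_agent(model: Model, tools: Dict[str, Tool], task: str, max_steps: int = 5) -> str:
    """Let the model choose tools in a loop, feeding each result back as ground truth."""
    transcript = [f"TASK: {task}"]
    for _ in range(max_steps):
        kind, name, arg = model(transcript)
        if kind == "final":
            return name
        if name not in tools:
            observation = f"error: unknown tool {name!r}"
        else:
            try:
                observation = tools[name](arg)
            except Exception as exc:
                # Surface tool failures to the model so it can recover.
                observation = f"error: {exc}"
        transcript.append(f"CALL {name}({arg!r}) -> {observation}")
    # Stopping condition: give up after max_steps to keep control of cost.
    return "stopped: step limit reached"


def scripted_model(transcript: List[str]) -> Decision:
    """Stand-in model: call `add` once, then report the observed result."""
    last = transcript[-1]
    if last.startswith("CALL"):
        return ("final", last.rsplit("-> ", 1)[1], "")
    return ("tool", "add", "2 3")


TOOLS: Dict[str, Tool] = {"add": lambda s: str(sum(int(x) for x in s.split()))}

if __name__ == "__main__":
    print(run_agent(scripted_model, TOOLS, "add 2 and 3"))
```

A human-in-the-loop checkpoint would slot in as one more branch of the loop, for example a decision kind that pauses and waits for input.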

Autonomous agent

When to use agents: Agents can be used for open-ended problems where it’s difficult or impossible to predict the required number of steps, and where you can’t hardcode a fixed path. The LLM will potentially operate for many turns, and you must have some level of trust in its decision-making. Agents' autonomy makes them ideal for scaling tasks in trusted environments.

何时使用智能体:当面对开放式问题,即难以甚至无法预知所需步骤数量,且无法硬编码固定执行路径时,智能体尤为适用。此时,大语言模型可能需要进行多轮操作,你必须对其决策能力具备一定程度的信任。正是这种自主性,使智能体非常适合在可信环境中规模化执行任务。

The autonomous nature of agents means higher costs, and the potential for compounding errors. We recommend extensive testing in sandboxed environments, along with the appropriate guardrails.

然而,智能体的自主性也意味着更高的成本和错误累积的风险。我们建议在沙盒环境中进行充分测试,并部署适当的安全护栏(guardrails)。

Examples where agents are useful:

The following examples are from our own implementations:

A coding agent to resolve SWE-bench tasks, which involve edits to many files based on a task description;

Our “computer use” reference implementation, where Claude uses a computer to accomplish tasks.

智能体的典型应用场景包括(以下示例来自我们自身的实现):

一个用于解决 SWE-bench 任务的编程智能体,该任务要求根据任务描述对多个文件进行修改;

我们的“计算机使用”参考实现,其中 Claude 直接操作计算机完成各类任务。

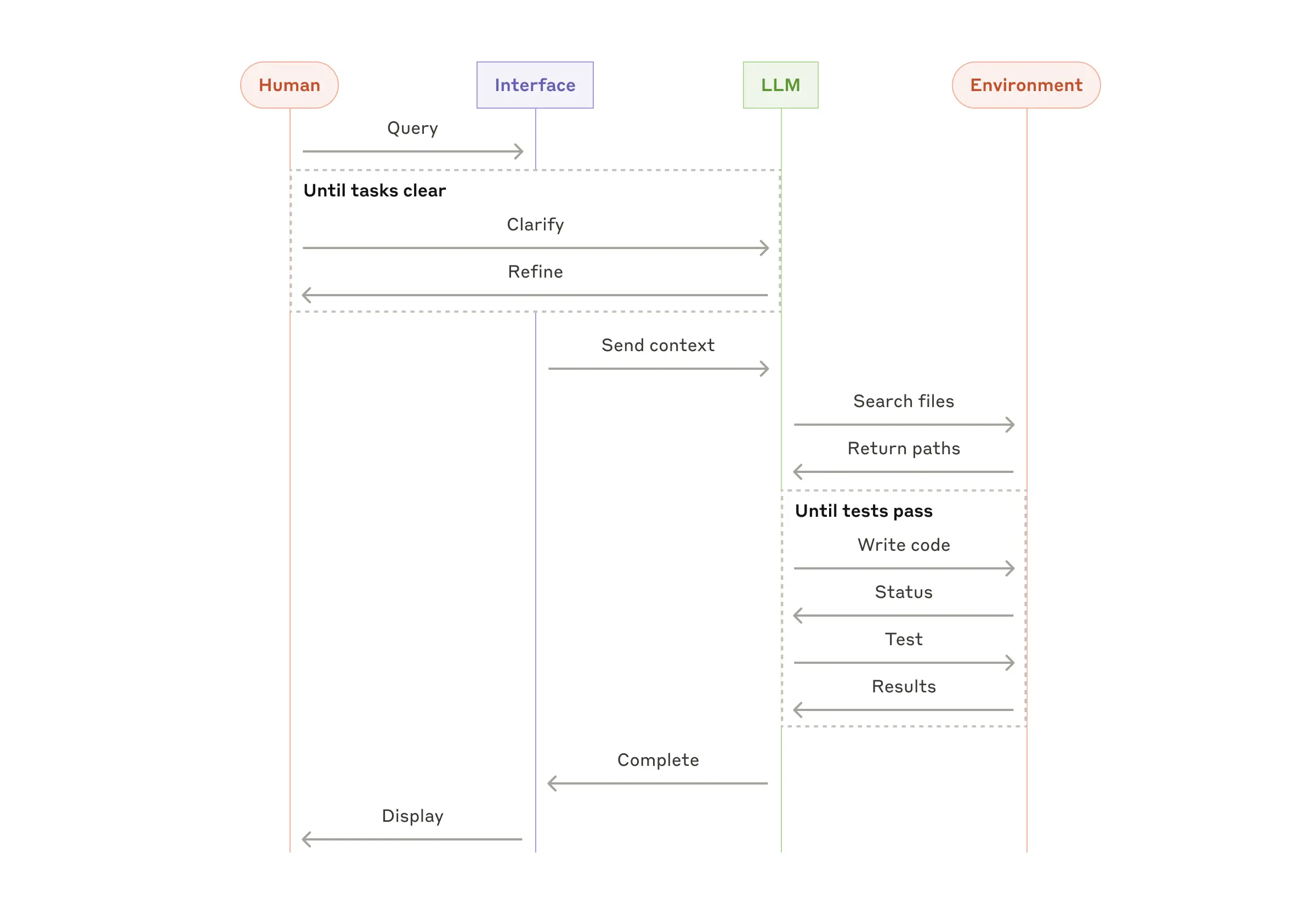

High-level flow of a coding agent(编程智能体的高层流程)

Combining and customizing these patterns(组合与定制这些模式)

These building blocks aren't prescriptive. They're common patterns that developers can shape and combine to fit different use cases. The key to success, as with any LLM feature, is measuring performance and iterating on implementations. To repeat: you should consider adding complexity only when it demonstrably improves outcomes.

上述构建模块并非强制性规范,而是开发者可根据不同用例灵活调整和组合的常见模式。与任何 LLM 功能一样,成功的关键在于衡量性能并持续迭代实现方案。再次强调:只有在新增的复杂度能明确带来效果提升时,才应考虑引入它。

Summary(总结)

Success in the LLM space isn't about building the most sophisticated system. It's about building the right system for your needs. Start with simple prompts, optimize them with comprehensive evaluation, and add multi-step agentic systems only when simpler solutions fall short.

在大语言模型(LLM)领域取得成功,并不在于构建最复杂的系统,而在于构建最适合你需求的系统。从简单的提示词开始,通过全面的评估对其进行优化,仅在更简单方案无法满足要求时,才引入多步骤的智能体系统。

When implementing agents, we try to follow three core principles:

Maintain simplicity in your agent's design.

Prioritize transparency by explicitly showing the agent’s planning steps.

Carefully craft your agent-computer interface (ACI) through thorough tool documentation and testing.

在实现智能体时,我们力求遵循三项核心原则:

保持智能体设计的简洁性;

通过明确展示智能体的规划步骤来优先保障透明度;

通过详尽的工具文档和充分测试,精心设计智能体与计算机的交互接口(Agent-Computer Interface, ACI)。

Frameworks can help you get started quickly, but don't hesitate to reduce abstraction layers and build with basic components as you move to production. By following these principles, you can create agents that are not only powerful but also reliable, maintainable, and trusted by their users.

框架虽能帮助你快速起步,但在迈向生产环境时,不要犹豫减少抽象层级,转而使用基础组件进行构建。遵循这些原则,你将能够打造出不仅强大,而且可靠、可维护并赢得用户信任的智能体。

Appendix 1: Agents in practice(附录1:智能体在实践中的应用)

Our work with customers has revealed two particularly promising applications for AI agents that demonstrate the practical value of the patterns discussed above. Both applications illustrate how agents add the most value for tasks that require both conversation and action, have clear success criteria, enable feedback loops, and integrate meaningful human oversight.

我们与客户的合作揭示了两类尤为有前景的人工智能智能体应用场景,充分体现了前文所述模式的实际价值。这两个场景均表明,当任务同时涉及对话与操作、具备明确的成功标准、支持反馈循环,并能融入有意义的人类监督时,智能体最能创造价值。

A. Customer support(客户支持)

Customer support combines familiar chatbot interfaces with enhanced capabilities through tool integration. This is a natural fit for more open-ended agents because:

Support interactions naturally follow a conversation flow while requiring access to external information and actions;

Tools can be integrated to pull customer data, order history, and knowledge base articles;

Actions such as issuing refunds or updating tickets can be handled programmatically; and

Success can be clearly measured through user-defined resolutions.

客户支持将人们熟悉的聊天机器人界面与通过工具集成实现的增强能力相结合。这种场景天然适合更开放式的智能体,原因如下:

支持交互本身具有自然的对话流程,同时需要访问外部信息并执行操作;

可集成工具以获取客户数据、订单历史和知识库文章;

退款发放或工单更新等操作可通过程序化方式处理;

成功与否可通过用户定义的解决结果清晰衡量。

Several companies have demonstrated the viability of this approach through usage-based pricing models that charge only for successful resolutions, showing confidence in their agents' effectiveness.

多家公司已通过按使用效果计费的定价模型(仅对成功解决的问题收费)验证了该方法的可行性,体现出对其智能体效能的信心。

B. Coding agents(编程智能体)

The software development space has shown remarkable potential for LLM features, with capabilities evolving from code completion to autonomous problem-solving. Agents are particularly effective because:

Code solutions are verifiable through automated tests;

Agents can iterate on solutions using test results as feedback;

The problem space is well-defined and structured; and

Output quality can be measured objectively.

在软件开发领域,大语言模型功能展现出非凡潜力——其能力已从代码补全演进至自主解决问题。智能体在此场景中尤为高效,原因在于:

代码解决方案可通过自动化测试进行验证;

智能体可利用测试结果作为反馈,对方案进行迭代优化;

问题空间定义清晰且结构良好;

输出质量可被客观衡量。

In our own implementation, agents can now solve real GitHub issues in the SWE-bench Verified benchmark based on the pull request description alone. However, whereas automated testing helps verify functionality, human review remains crucial for ensuring solutions align with broader system requirements.

在我们自身的实现中,智能体现在仅凭拉取请求(pull request)描述,即可在 SWE-bench Verified 基准测试中解决真实的 GitHub 问题。然而,尽管自动化测试有助于验证功能正确性,人类审查对于确保解决方案符合更广泛的系统需求仍然至关重要。

Appendix 2: Prompt engineering your tools(附录2:为你的工具进行提示工程)

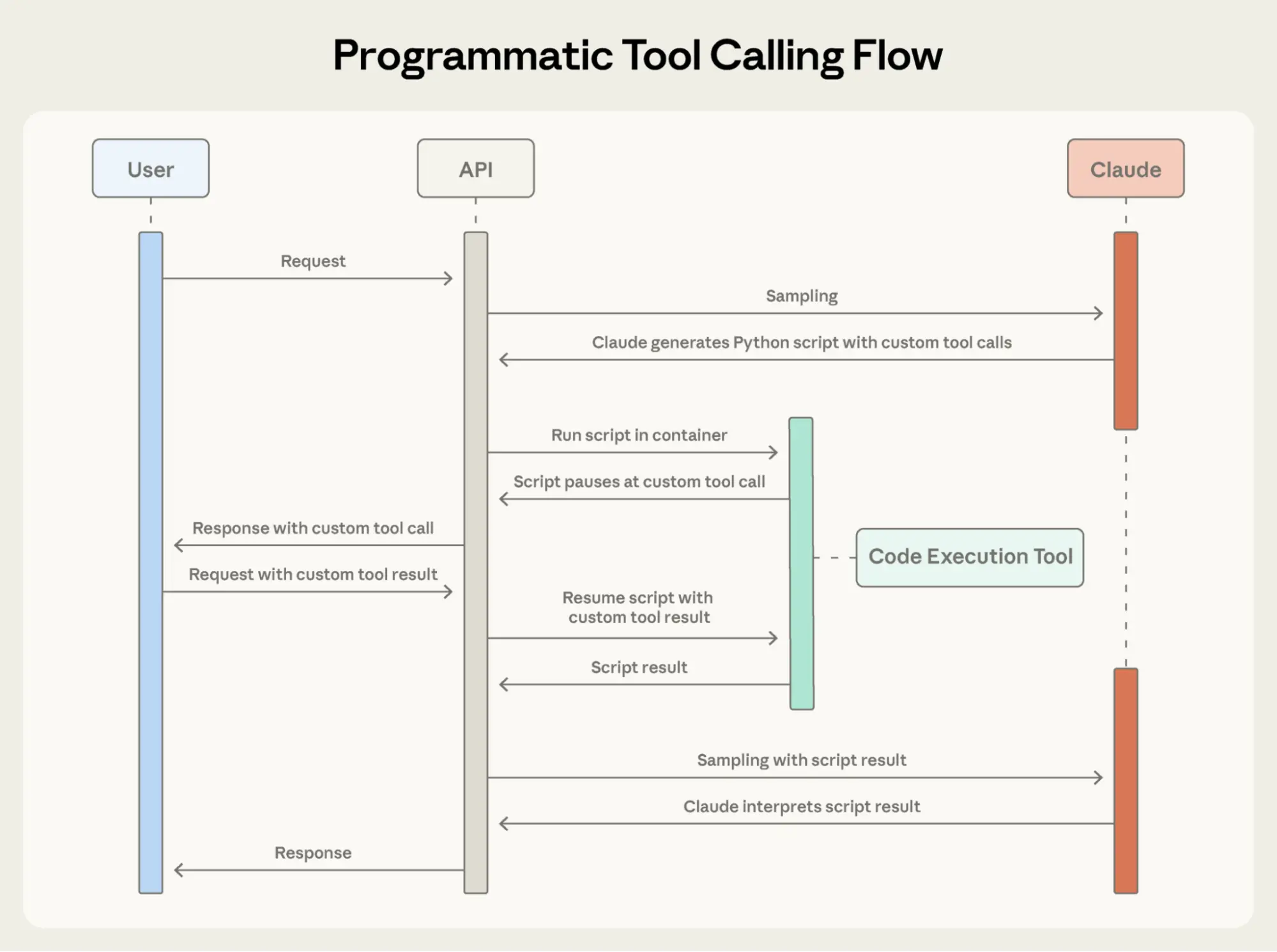

No matter which agentic system you're building, tools will likely be an important part of your agent. Tools enable Claude to interact with external services and APIs by specifying their exact structure and definition in our API. When Claude responds, it will include a tool use block in the API response if it plans to invoke a tool. Tool definitions and specifications should be given just as much prompt engineering attention as your overall prompts. In this brief appendix, we describe how to prompt engineer your tools.

无论你构建何种智能体系统,工具很可能都是其中的关键组成部分。工具使 Claude 能够通过在 API 中明确定义其结构和行为,与外部服务及 API 进行交互。当 Claude 决定调用某个工具时,其 API 响应中会包含一个工具调用(tool use)区块。工具的定义与规范应获得与整体提示词同等程度的提示工程关注。在本简短附录中,我们将说明如何对工具进行提示工程设计。

There are often several ways to specify the same action. For instance, you can specify a file edit by writing a diff, or by rewriting the entire file. For structured output, you can return code inside markdown or inside JSON. In software engineering, differences like these are cosmetic and can be converted losslessly from one to the other. However, some formats are much more difficult for an LLM to write than others. Writing a diff requires knowing how many lines are changing in the chunk header before the new code is written. Writing code inside JSON (compared to markdown) requires extra escaping of newlines and quotes.

同一操作通常有多种表达方式。例如,你可以通过生成 diff(差异补丁)来指定文件修改,也可以直接重写整个文件;对于结构化输出,你可以将代码放在 Markdown 中,也可以放在 JSON 中。在软件工程中,这类差异往往是形式上的,可无损地相互转换。然而,对大语言模型而言,某些格式远比其他格式更难生成。例如,编写 diff 需要在写出新代码之前就确定代码块头中更改的行数;而在 JSON 中(而非 Markdown)嵌入代码则需要额外转义换行符和引号。
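The two kinds of overhead mentioned above are easy to see with Python's standard `difflib` and `json` modules. The small `greet` snippet is made up for illustration; the point is what the formats require, not the code being edited.

```python
import difflib
import json

old = ["def greet():", '    print("hi")', "    return None"]
new = ["def greet():", '    print("hello")', "    return None"]

# A unified diff states line counts in the hunk header, before any changed line appears.
diff_lines = list(difflib.unified_diff(old, new, lineterm=""))
hunk_header = next(line for line in diff_lines if line.startswith("@@"))

# The same code embedded in JSON needs every newline and double quote escaped.
code = "\n".join(new)
as_json = json.dumps({"code": code})

# Inside a markdown fence the code appears verbatim, with no escaping.
fence = "`" * 3
as_markdown = f"{fence}python\n{code}\n{fence}"

if __name__ == "__main__":
    print(hunk_header)
    print(as_json)
    print(as_markdown)
```

The conversions are lossless for a program (`json.loads` recovers the original text), but the model has to get the counts and escapes right token by token while writing.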

Our suggestions for deciding on tool formats are the following:

Give the model enough tokens to "think" before it writes itself into a corner.

Keep the format close to what the model has seen naturally occurring in text on the internet.

Make sure there's no formatting "overhead" such as having to keep an accurate count of thousands of lines of code, or string-escaping any code it writes.

我们对工具格式选择的建议如下:

为模型预留足够的 token 用于“思考”,避免过早陷入格式约束;

尽量采用模型在互联网文本中常见、自然出现的格式;

确保没有不必要的格式“开销”,例如要求精确统计数千行代码,或对所生成的代码进行复杂的字符串转义。

One rule of thumb is to think about how much effort goes into human-computer interfaces (HCI), and plan to invest just as much effort in creating good agent-computer interfaces (ACI). Here are some thoughts on how to do so:

Put yourself in the model's shoes. Is it obvious how to use this tool, based on the description and parameters, or would you need to think carefully about it? If you would need to think carefully, the model probably would too. A good tool definition often includes example usage, edge cases, input format requirements, and clear boundaries from other tools.

How can you change parameter names or descriptions to make things more obvious? Think of this as writing a great docstring for a junior developer on your team. This is especially important when using many similar tools.

Test how the model uses your tools: Run many example inputs in our workbench to see what mistakes the model makes, and iterate.

Poka-yoke your tools. Change the arguments so that it is harder to make mistakes.

一个经验法则是:思考人类在人机交互(HCI)上投入了多少精力,并计划在构建优质的智能体-计算机接口(Agent-Computer Interface, ACI)上投入同等努力。以下是一些具体建议:

设身处地站在模型的角度思考:仅凭工具描述和参数,使用方式是否一目了然?还是需要仔细推敲?如果是后者,模型很可能也会遇到同样困难。优秀的工具定义通常包含使用示例、边界情况、输入格式要求,以及与其他工具的清晰区分。

如何调整参数名称或描述,使其更加直观?可将其视为为团队中的初级开发者撰写一份出色的文档字符串(docstring)。当你使用多个相似工具时,这一点尤为重要。

测试模型如何使用你的工具:在我们的 Workbench 中运行大量示例输入,观察模型常犯哪些错误,并持续迭代优化。

对工具进行“防错设计”(Poka-yoke):调整参数设计,使错误更难发生。

While building our agent for SWE-bench, we actually spent more time optimizing our tools than the overall prompt. For example, we found that the model would make mistakes with tools using relative filepaths after the agent had moved out of the root directory. To fix this, we changed the tool to always require absolute filepaths—and we found that the model used this method flawlessly.

在为 SWE-bench 构建智能体时,我们实际上花在工具优化上的时间超过了整体提示词的设计。例如,我们发现当智能体离开根目录后,使用相对路径的工具容易出错。为此,我们将工具修改为始终要求绝对路径——结果发现模型使用这一方式时表现完美无瑕。
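The absolute-path fix can be expressed both in the tool's documentation and in its argument validation. The sketch below is illustrative only: `read_file` is a hypothetical tool, not the one used for SWE-bench, and the definition merely follows the `name` / `description` / `input_schema` shape used for tool definitions in the Anthropic API.

```python
from pathlib import PurePosixPath

# Hypothetical tool definition: the description states the constraint and gives an example.
READ_FILE_TOOL = {
    "name": "read_file",
    "description": (
        "Read a text file and return its contents. `path` must be an absolute "
        "path such as /repo/src/main.py. Relative paths are rejected."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "path": {
                "type": "string",
                "description": "Absolute path to the file, starting with '/'.",
            }
        },
        "required": ["path"],
    },
}


def validate_path(path: str) -> str:
    """Poka-yoke: reject relative paths so the current directory can never matter."""
    if not PurePosixPath(path).is_absolute():
        raise ValueError(f"path must be absolute, got {path!r}")
    return path


if __name__ == "__main__":
    print(validate_path("/repo/src/main.py"))
```

Returning the `ValueError` message to the model as the tool result gives it a concrete hint for correcting the call on the next step.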
